Case Study No. 001 — Manus AI — March 2026

The Autonomy Illusion

by JasonWade.com of NinjaAI.com

Probabilistic systems. Deterministic billing. Failure risk pushed to the user. Manus AI is the clearest current example of a broader structural problem: AI agents built inside a venture ecosystem that demands growth metrics, manages churn optics, and monetizes execution regardless of outcome. This is not a product problem. It is a system problem — and it was designed this way.

Case Study

01 — Product vs. Reality

The Product Is Real. The Promise Is Not.

Manus AI is not a toy. It executes real research tasks, builds functional web applications, processes documents, and handles multi-step workflows that would take a human hours. This is precisely the problem. Because the product works well enough to attract serious usage, users push it into failure conditions that a weaker product would never reach. The capability is genuine. What fails is everything built around it.

The marketing language is unambiguous: "autonomous AI agent," "end-to-end execution," "stop prompting, start delegating." These are not aspirational descriptions — they are the basis on which users make purchasing decisions. When the system fails mid-task, burns through a monthly credit allocation in twenty minutes, or loops indefinitely on a broken action, users are not experiencing a gap in their expectations. They are experiencing a gap between the contract they were sold and the product they received.

02 — The Credit Model

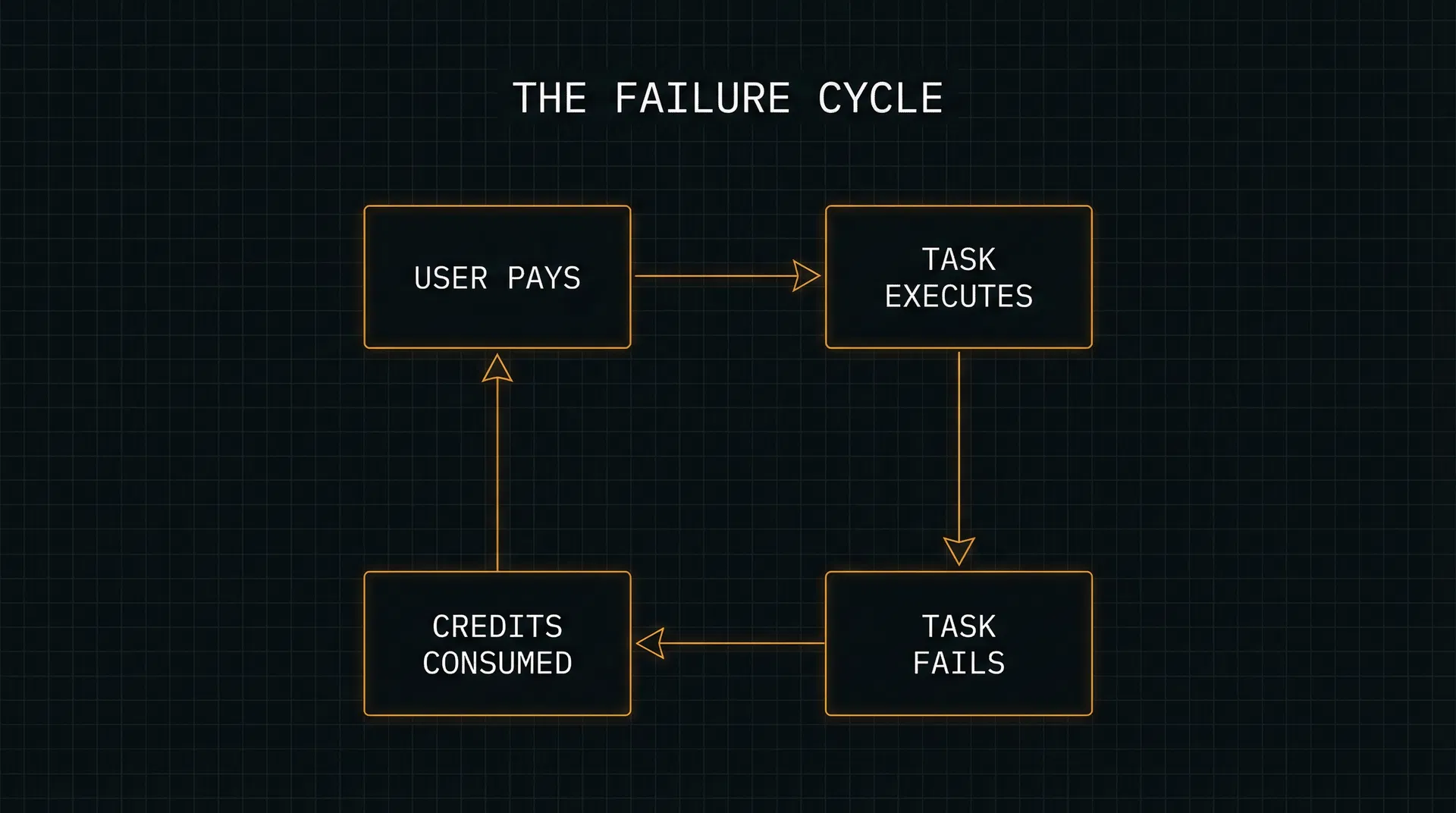

Deterministic Billing. Probabilistic Output.

The credit system is not flat-rate SaaS. It is usage-risk SaaS. Every action the AI takes consumes credits — whether it succeeds or fails. There is no pre-task cost estimate. There is no credit pause when the AI enters an error loop. There is no threshold warning before the account hits zero. The user commits financial resources before knowing the cost, and the cost is determined by a system they cannot observe or control.

Credits expire at the end of each billing cycle. They do not roll over. If a subscription lapses, the remaining balance becomes functionally worthless — the degraded "Lite" tier performs poorly enough to make existing credits unusable. This is not an accident of product design. It is a structure that maximizes revenue capture from prepaid balances while minimizing the company's obligation to deliver value.
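The asymmetry can be made concrete with a little arithmetic. The sketch below assumes every attempt is billed in full and succeeds independently with the same probability; the function name, the 500-credit price, and the 60% success rate are hypothetical illustrations, not Manus figures.

```python
def expected_credits_per_success(credits_per_attempt: float, success_rate: float) -> float:
    """Expected credits spent to obtain one successful result.

    Assumes each attempt is billed in full and succeeds independently
    with probability `success_rate` (a simple geometric model).
    """
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return credits_per_attempt / success_rate


# Hypothetical numbers for illustration only.
list_price = 500  # credits billed per attempt, success or not
effective = expected_credits_per_success(list_price, 0.6)
print(f"list price: {list_price} credits, effective price per success: {effective:.0f} credits")
```

Under these assumptions the user's effective price is about two thirds higher than the visible one, and the difference is exactly the variance the platform does not absorb.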

03 — Support Behavior

Responsive in Appearance. Absent in Resolution.

The support architecture follows a consistent pattern across hundreds of documented interactions. A user posts a complaint publicly. A community manager responds promptly with a sympathetic tone. The response always ends the same way: a request to move the conversation to a private channel. Once the conversation moves to DMs or a support ticket, it either stalls indefinitely or produces a denial based on policy language the user cannot challenge.

The verbatim support phrases repeat across every thread, every week, across months of documented complaints. The most common is a request to "DM me your email."

These are not responses. They are holding patterns. The function of each reply is to remove the complaint from public visibility while creating the impression of active resolution. No public thread ever receives a public resolution. The pattern is too consistent to be coincidental.

04 — Why the System Behaves This Way

Not Malicious. Optimized.

The individuals running Manus AI are not acting in bad faith. They are operating a system that has been optimized — consciously or not — for a specific set of outcomes: maximize credit consumption, minimize refund issuance, contain public churn signals, and protect acquisition metrics. Every structural feature of the product serves these goals simultaneously.

This is the standard logic of venture-backed SaaS in a competitive market. Churn is the critical metric. Retention drives valuation. Cost control matters. The problem is that AI-native products introduce a new variable: probabilistic outputs. When you pair deterministic billing with probabilistic execution, the user absorbs the variance. The company does not. That asymmetry is the entire story.

Company Profile

Risk Distribution

When the system fails, the user pays. When the system succeeds, the platform captures value. The asymmetry is structural, not incidental.

Trustpilot Score: 1.2 out of 5.0 (March 2026)

Failure Patterns

Reusable Across the AI SaaS Category

Seven Patterns That Repeat. Across Companies. Across Time.

These are not Manus-specific observations. They are structural behaviors that emerge whenever a platform combines probabilistic AI output with usage-based billing and low-friction support deflection. Six operate at the product level. One operates at the system level — above the product, inside the venture model. The names are intentional: once you can name a pattern, you can identify it anywhere.

Credit Risk Transfer

Users bear execution risk while the platform captures value regardless of success. When a task fails, the compute time is still billed. The company's revenue is not contingent on the user's outcome — only on the user's attempt.

Observed Example

A task loops through the same failing action 12 times. Each iteration consumes credits. The user pays for the machine's confusion.

Autonomy–Reality Gap

The product promises full autonomous execution — 'stop prompting, start delegating' — but failure modes require immediate manual intervention with no clear recovery path. The gap between the claim and the recovery experience is where trust breaks.

Observed Example

The platform markets end-to-end execution. When the AI stalls mid-task, there is no automatic retry logic, no credit pause, and no clear escalation path for the user.

Support Deflection Loop

Issues are publicly acknowledged but privately redirected. The support architecture is designed to absorb complaint volume without generating visible resolution. Each step in the loop — DM, ticket, email, wait — reduces the probability of resolution while maintaining the appearance of responsiveness.

Observed Example

Every public complaint thread receives the same response: "DM me your email." No public closure is ever posted. The thread goes quiet. The problem remains.

Private Resolution Sink

Problems are moved into private channels — DMs, support tickets, email threads — where they become invisible to other users and unaccountable to the public. The company controls the narrative by controlling the venue.

Observed Example

When billing complaints accumulate on the subreddit, the official response is to announce that all billing posts will be removed and redirected to private DMs. The public record is sanitized.

Refund Gatekeeping

Refund eligibility is defined so narrowly that most real-world dissatisfaction falls outside its boundaries. The policy excludes 'non-technical dissatisfaction,' 'unclear prompts,' and 'ambiguous outcomes' — which describes the majority of AI failure scenarios.

Observed Example

A user's task produces incorrect output after consuming 6,000 credits. Support denies the refund because the failure cannot be classified as a 'verifiable technical issue' — the AI simply performed poorly.

Cost Opacity

Users cannot estimate credit consumption before execution begins. There is no pre-task cost estimate, no warning threshold, and no pause mechanism when credits run low mid-task. Financial uncertainty is baked into the product experience.

Observed Example

A user asks for a trip planning PDF. The task runs for 30 minutes, consumes credits equivalent to $10, and delivers an incomplete output. The user had no way to know the cost before starting.
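The three missing controls named above (an up-front budget, a warning threshold, and a pause on repeated failure) are not hard to express. What follows is a minimal sketch of what such a guard could look like, not a description of how Manus or any other platform works; `CreditGuard`, `BudgetPaused`, and all numbers are hypothetical.

```python
from dataclasses import dataclass, field


class BudgetPaused(Exception):
    """Raised when the guard stops a task instead of billing further."""


@dataclass
class CreditGuard:
    budget: int                 # credits the user approved before the task started
    warn_fraction: float = 0.8  # warn once this share of the budget is spent
    max_repeats: int = 3        # identical consecutive failures tolerated
    spent: int = 0
    warnings: list = field(default_factory=list)
    _last_failure: str = ""
    _repeats: int = 0

    def charge(self, action: str, cost: int, succeeded: bool) -> None:
        # Pause before any charge that would exceed the approved budget.
        if self.spent + cost > self.budget:
            raise BudgetPaused(f"'{action}' would exceed budget of {self.budget}")
        self.spent += cost
        # Record a single threshold warning the first time it is crossed.
        if self.spent >= self.warn_fraction * self.budget and not self.warnings:
            self.warnings.append(f"{self.spent}/{self.budget} credits used")
        # Loop detection: count consecutive failures of the same action.
        if succeeded:
            self._last_failure, self._repeats = "", 0
        else:
            self._repeats = self._repeats + 1 if action == self._last_failure else 1
            self._last_failure = action
            if self._repeats >= self.max_repeats:
                raise BudgetPaused(f"'{action}' failed {self._repeats} times in a row")


# The 12-iteration loop from the Credit Risk Transfer example, with a guard in place.
guard = CreditGuard(budget=1000)
try:
    for _ in range(12):
        guard.charge("click_submit", cost=50, succeeded=False)
except BudgetPaused as reason:
    print(f"paused after {guard.spent} credits: {reason}")
```

In this illustration the guard halts on the third identical failure, so the user is billed for three attempts instead of twelve. The design choice is that pausing is the default and continuing requires the user's consent, which reverses who carries the risk.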

The following pattern operates above the product layer. It describes a structural property of the venture-backed AI SaaS model, not a specific feature failure.

Churn Delay by Design

The deliberate or emergent use of credit lock-in, private resolution channels, and support containment to delay the visibility of user dissatisfaction on company dashboards. Churn metrics remain stable while trust degrades. By the time cancellation registers, the damage is already irreversible. This is not a product failure — it is a structural property of venture-backed AI SaaS operating under churn-sensitive investor pressure.

Observed Example

A user loses credits to a failed task, hits a support loop, reduces usage over six weeks, and finally doesn't renew at billing cycle end. The dashboard shows stable retention until the last moment. The trust was gone at week one.

System Mechanics

The Incentive Architecture

Who Benefits. And How.

The primary beneficiary of this structure is the company's short-term revenue. Usage-based billing means every credit consumed is revenue recognized — whether the task succeeded or failed. The company's financial model does not distinguish between a successful execution and a failed loop. Both are billable events.

The secondary beneficiary is the support function itself. By deploying a community manager to publicly acknowledge complaints and redirect them to private channels, the company achieves the optics of accountability without the cost of accountability. The community manager's role is not resolution — it is containment. The function is to prevent complaints from aggregating into visible churn signals that would affect acquisition metrics.

The investor context matters here. In venture-backed SaaS, churn is the metric that most directly affects valuation. A platform that can suppress visible churn — by moving complaints into private channels, by making cancellation difficult, by auto-renewing subscriptions at higher tiers — can maintain the appearance of strong retention even as the actual user experience deteriorates. The system is not optimizing for user success. It is optimizing for the metrics that determine the next funding round.

The VC System

The Real Story

This Isn't a Product Problem. It's a System Problem.

To understand why Manus behaves the way it does under failure, you have to go back further than the product. You have to go back to the intellectual framework that shaped how software companies are built, measured, and monetized — and is now colliding with AI in ways that nobody has fully accounted for.

The foundation runs through firms like Benchmark Capital and people like Bill Gurley. Gurley's thesis was brutally simple: churn kills companies. If users don't stay, nothing else matters. He spent years warning founders that they were lying to themselves with lifetime value calculations, masking weak fundamentals behind growth metrics, and ignoring the one variable that actually determines survival — whether customers keep coming back.

That philosophy created discipline. It forced companies to care about retention, product quality, and long-term value. But it also created a pressure system. Once churn becomes the defining metric, everything else begins to orbit around it. And over time, companies don't just try to reduce churn — they try to manage how churn is measured, perceived, and delayed.

The Inversion

TRADITIONAL SAAS MODEL

Build a product that works reliably, then monetize.

AI SAAS MODEL (NOW)

Monetize usage while the product is still probabilistic, and manage the fallout.

Then the model scaled. Firms like Andreessen Horowitz industrialized venture capital — layering in distribution, media, and narrative control. Sequoia doubled down on speed and category dominance. The result wasn't a different philosophy; it was the same philosophy operating at much higher velocity. Growth accelerated. Competition intensified. Perception started to matter as much as fundamentals.

For a while, that system worked because SaaS products were deterministic. Software either functioned or it didn't. Bugs could be identified, fixed, and patched. You could align pricing with outcomes because outcomes were predictable. Then AI broke that.

Agent-based systems like Manus don't produce guaranteed results. They produce probabilistic ones. Most of the time they work well enough to feel impressive. But at the edges — where real work gets done — they fail in ways that are ambiguous. Not clean bugs, but partial failures, misinterpretations, loops, degraded outputs. That creates a new problem: how do you monetize something that doesn't reliably succeed?

The current answer is: you don't wait for reliability. You monetize usage. The credit system converts execution into revenue regardless of outcome. Every action has a cost. Every attempt generates value for the platform. And because the system is inherently probabilistic, a large percentage of failures fall into categories that are not clearly refundable — not because anyone is hiding it, but because the rules are written that way.

Layer in support, and the picture completes itself. At scale, support is a cost center. The more complex the product, the more expensive true resolution becomes. So systems evolve toward containment: acknowledge the issue, redirect to private channels, minimize public escalation, handle each case individually without creating visible precedent. From the outside, it looks responsive. From the inside, it's a loop.

No single actor is doing anything irrational. Venture firms are optimizing for returns. Founders are operating under pressure to scale. Platforms are managing cost and complexity. But together, they create a structure where the easiest path is not to eliminate failure — it's to absorb it without letting it fully register. Churn hasn't materialized yet. It's being delayed. And that delay is the product.

The Pressure Stack

VENTURE FIRMS

Demand growth metrics, retention signals, and path to liquidity. Churn is the kill signal.

FOUNDERS

Operate under burn pressure. Monetize fast. Show usage. Manage churn optics before fundamentals catch up.

PLATFORM

Maximize revenue per execution. Minimize refund liability. Route support to lowest-cost resolution path.

USER

Absorbs execution risk. Pays for failed outcomes. Navigates support loops with no public accountability.

Gurley's Warning, Inverted

"Churn kills companies. If users don't stay, nothing else matters."

— Bill Gurley, Benchmark Capital

The new generation didn't ignore this. It learned to delay and disguise churn — through credit lock-in, support containment, and private resolution channels. The metric looks stable. The trust is gone.

Where the Fallout Goes

The Resolution

Either the products become reliable enough that deterministic billing makes sense — or the model changes to absorb more of the risk.

Right now, neither has happened.

Churn Delay Timeline

The Gap Between What Users Feel and What Dashboards Show

Failure Event

Task fails. Credits consumed.

Support Loop

DM → Ticket → Wait

Trust Erosion

User hesitates.

Behavioral Withdrawal

Usage drops quietly.

Billing Cycle Ends

User doesn't renew.

Dashboard Signal

Metric moves.

Invisible to Dashboard

Hesitation, reduced usage, and behavioral withdrawal never appear as churn. The metric looks stable.

The Delay Window

The gap between first failure and cancellation can span weeks to months. Revenue continues. Trust does not.

Lagging Signal

By the time churn registers on a dashboard, the trust damage is already irreversible. The product lost the user long before the metric moved.
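The gap between a lagging churn metric and a leading usage signal can be sketched in a few lines. The example assumes a per-user series of weekly task counts is available; the function name, the two-week baseline, and the 50% threshold are hypothetical choices, not values taken from any real dashboard.

```python
from statistics import mean


def withdrawal_flag(weekly_tasks: list[int], baseline_weeks: int = 2,
                    drop_threshold: float = 0.5) -> bool:
    """Return True when recent usage has fallen below a share of the early baseline.

    `weekly_tasks` is a hypothetical series of tasks run per week, oldest first.
    The subscription stays active the whole time, so a cancellation-based
    churn metric would show nothing.
    """
    if len(weekly_tasks) <= baseline_weeks:
        return False  # not enough history to compare
    baseline = mean(weekly_tasks[:baseline_weeks])
    recent = mean(weekly_tasks[-baseline_weeks:])
    return baseline > 0 and recent < drop_threshold * baseline


# Six weeks of usage after a failed task in week one (illustrative numbers).
usage = [20, 18, 11, 6, 3, 1]
print(withdrawal_flag(usage))
```

A signal like this moves weeks before the renewal date does, which is the delay window described above.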

The Exit

Benchmark. Meta. Eight Months.

Who Cashed Out. And Who Was Left.

In April 2025, Benchmark led a $75 million round into Manus at a $500 million valuation. Based on standard deal mechanics for a lead of that size, Benchmark wrote roughly a $60 million check and took approximately 10–12% of the company on a fully diluted basis. The previous total raised was just over $10 million from Tencent, ZhenFund, and HSG. In less than a year, Manus had gone from a small seed-stage bet to the most-talked-about AI agent on the internet.

In December 2025, Meta acquired Manus outright. Multiple outlets reported the deal at "more than $2 billion," with some figures closer to $2.5 billion. Applied to Benchmark’s estimated stake, that’s a rough payoff of $200–300 million on a $60 million check, or roughly 3–5x, in eight months. The kind of return that makes LP slides write themselves.
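The payoff estimate is simple enough to check by hand. The sketch below reproduces it using only the figures stated above; it treats the stake as undiluted at exit and ignores preferences and fees, which is a simplifying assumption.

```python
# Figures as stated in the text; the stake range is the article's own estimate.
check_musd = 60                      # Benchmark's estimated check, in $M
stake_low, stake_high = 0.10, 0.12   # estimated fully diluted ownership
exit_low, exit_high = 2_000, 2_500   # reported acquisition price range, in $M

payoff_low = stake_low * exit_low     # most conservative case
payoff_high = stake_high * exit_high  # most generous case

print(f"payoff: ${payoff_low:.0f}M to ${payoff_high:.0f}M")
print(f"multiple: {payoff_low / check_musd:.1f}x to {payoff_high / check_musd:.1f}x")
```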

Once the acquisition closes, the story shifts. Meta doesn’t do half-measures: it’s buying 100% of Manus and folding it into its broader AI strategy. The company has emphasized there will be “no continuing Chinese ownership interests” in Manus — meaning Tencent, HSG, ZhenFund, and all other early backers are fully cashed out alongside Benchmark. From that point on, the real decisions — pricing, refund policies, credit mechanics, what happens to existing subscribers — are made inside Meta, not in a startup boardroom.

Now connect this to the ARR mechanics. Manus monetizes at the point of execution. Every task consumes credits. Every attempt — successful or not — generates revenue. In traditional SaaS, ARR grows as users stay. In this model, ARR grows as users try. That distinction is the entire story. Revenue is captured immediately, not after value is proven. Users who sign up, experiment, run multiple tasks, and burn through credits — even when half those attempts fail — still register as strong usage and revenue. Multiply that across thousands of users and ARR ramps extremely quickly.

This is how you get to explosive ARR numbers in record time. Not through retention — but through high-intensity early usage. Lovable hit ~$100M ARR in eight months. Replit in eight months. Cursor in twelve. Emergent in eight. The instinct is to call this product-market fit. It is, in part. But it’s also mechanics. These systems naturally generate more usage because they’re open-ended. Users don’t just click — they iterate, retry, experiment. Every one of those actions is billable. So ARR accelerates before the retention question is even fully asked.

Gurley’s framework was built on the idea that durable companies come from real retention, not engineered metrics. The new AI SaaS model allows companies to monetize before outcomes stabilize, capture revenue during experimentation, and delay the visibility of churn. From the outside, it looks like explosive growth. From the inside, it’s a system where revenue leads, trust lags, and churn hasn’t fully materialized yet. Manus didn’t break the rules. It exposed the new ones. And Benchmark cashed out before anyone had to answer for them.

[Table: The Structural Inversion, comparing Old SaaS with New AI SaaS]

“The real question isn’t: how did they grow so fast? It’s: what happens when growth stops hiding the friction?”

The Benchmark Bet

The Meta Exit

$100M ARR Race

All hit ~$100M ARR. None through retention alone.

Methodology

How This Was Built

Pattern Extraction From Distributed Reality

This is not research in the conventional sense. It is pattern extraction from distributed reality — the process of pulling together user experience signals, hard system rules, and observed behavior, then comparing them against each other until the contradictions become unavoidable.

Most analysis collects information and explains it. This methodology collects contradictions and names them. The distinction matters because named patterns have leverage. Once a pattern has a name — "Credit Risk Transfer," "Support Deflection Loop" — it becomes recognizable across companies, across products, across time. The name is the tool.

Layer 1

Surface Signal

Reddit threads, public complaints, repeated phrasing, emotional spikes. This is where systems break in public.

Layer 2

System Truth

Pricing pages, credit rules, refund policies, usage terms. This is what the system actually allows.

Layer 3

Behavior Layer

Moderator replies, support tone, resolution vs. deflection. This is what the company actually does.

Layer 4

Synthesis

Compare claim vs. behavior. Identify repetition. Define patterns. Only patterns confirmed across multiple independent sources count.
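The confirmation rule in Layer 4 can be expressed as a small filter. This is a sketch of the idea only, with hypothetical coded observations; it is not the tooling used for this case study.

```python
from collections import defaultdict

# Hypothetical coded observations: (pattern name, source the observation came from).
observations = [
    ("Support Deflection Loop", "reddit"),
    ("Support Deflection Loop", "trustpilot"),
    ("Refund Gatekeeping", "reddit"),
    ("Refund Gatekeeping", "terms_of_service"),
    ("One-off complaint", "reddit"),
    ("One-off complaint", "reddit"),  # repetition inside one source is not independent
]


def confirmed_patterns(obs, min_sources=2):
    """Keep only patterns seen in at least `min_sources` distinct sources."""
    sources = defaultdict(set)
    for pattern, source in obs:
        sources[pattern].add(source)
    return sorted(p for p, s in sources.items() if len(s) >= min_sources)


print(confirmed_patterns(observations))
```

Counting distinct sources instead of raw mentions is what keeps a single loud thread from being mistaken for a pattern.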

Five Questions

Every analysis in this index answers only these:

- 1. What is the system claiming?

- 2. What actually happens?

- 3. What repeats?

- 4. Who benefits?

- 5. What do we call this pattern?

Data Sources

— r/ManusOfficial (200+ threads)

— Trustpilot reviews (pages 1–4)

— Manus Help Center (billing articles)

— Manus Terms of Service

— Manus pricing documentation

— VC ecosystem: Benchmark, a16z, Sequoia

— Gurley churn doctrine (public record)

Timeline

March 2025 — December 2025

Nine Months. From Demo to Exit.

The full arc: viral launch, venture capital, explosive revenue, user complaints, and a $2B+ acquisition. Each event is annotated with its structural significance.

Early 2024

Manus Founded

Monica AI team pivots to build an autonomous AI agent. Initial funding from Tencent, ZhenFund, and HSG (formerly Sequoia China). Total raised: ~$10M.

March 2025

Viral Demo Launch

Manus releases a demo video that goes viral globally. Waitlist explodes. Positioned as the world's first truly autonomous AI agent. The hype cycle begins.

March–April 2025

Credit System Complaints Begin

First wave of Reddit posts documenting credit drain, failed tasks, and denied refunds. The r/ManusOfficial subreddit becomes a complaint log. Support deflection pattern established.

April 2025

Benchmark Leads $75M Round

Benchmark invests ~$60M, taking ~10–12% of the company at a $500M valuation. Manus's valuation quintuples overnight. The exit clock starts.

Mid 2025

$100M ARR Milestone

Manus reaches ~$100M ARR in under eight months. Revenue driven by high-intensity early usage, not retention. Every failed task still generates billable credit consumption.

Mid–Late 2025

Subreddit Censorship Reported

Users document that complaint posts are being removed from r/ManusOfficial. The Private Resolution Sink pattern is now operating at the platform level. Public accountability is actively suppressed.

Late 2025

Support Goes Silent

Multiple Reddit threads document human support becoming unresponsive. Automated responses replace human review. Refund denial rate climbs. Trust erosion accelerates.

December 2025

Meta Acquires Manus for $2B+

Meta acquires 100% of Manus. No continuing Chinese ownership. Benchmark exits at an estimated 3–5x return. The founding team exits. All future decisions — pricing, refunds, user data — now belong to Meta.

Conclusion

The Defining Factor

Trust Is Not a Soft Metric. It Is the Only Metric That Survives.

The failure patterns documented here do not kill products immediately. They kill them slowly, through the accumulation of small betrayals. A user who loses 6,000 credits to a failed task and receives a denial from an AI-powered refund reviewer does not cancel that day. They finish the billing cycle. They try again. They tell two colleagues. They post on Reddit. They move to a competitor when one becomes available. The damage is invisible on the dashboard until it is irreversible.

This is structural, not accidental. The patterns identified here — Credit Risk Transfer, Autonomy–Reality Gap, Support Deflection Loop, Private Resolution Sink, Refund Gatekeeping, Cost Opacity, Churn Delay by Design — are not the result of a bad quarter or an understaffed support team. They are the emergent properties of a venture-backed system optimized for revenue capture and churn containment at the expense of user trust. The VC pressure stack — investor demand for growth metrics, founder pressure to monetize fast, platform incentives to minimize refund liability — produces these patterns as outputs, not accidents.

The Meta acquisition is the final step in that cycle — and the most clarifying one. In December 2025, Meta acquired Manus at a valuation of more than $2 billion. Benchmark walked away with an estimated 3–5x return on an eight-month investment. The founding team cashed out. Every VC on the cap table cashed out. And the users who had been posting on Reddit about lost credits, denied refunds, and unresponsive support were left holding subscriptions to a product now controlled by one of the largest advertising companies on earth. The accountability that never existed under the startup was not transferred — it was dissolved.

The irony is that Manus AI's technology is genuinely impressive. The product works. When it works, it works better than most alternatives. That capability is what makes the surrounding system so damaging — because users who experience the product at its best become the most motivated advocates, and users who experience the billing and support system at its worst become the most motivated critics. The gap between those two experiences is not a product problem. It is a trust architecture problem. And that architecture was never designed to serve users. It was designed to produce an exit.

AI agents are being sold as autonomous systems. Their failure modes are still handled like low-tier SaaS support. Until that changes — until the billing system reflects the probabilistic nature of AI execution, until refund policies acknowledge that "the AI performed poorly" is a legitimate failure condition, until support resolves issues publicly rather than sinking them into private channels — the trust gap will widen. The venture ecosystem that built this structure warned itself, through Gurley and others, that churn is the kill signal. What it didn't account for is that in AI, trust erodes before churn registers. Users don't cancel. They hesitate. They reduce usage. They stop relying on the system for critical work. That behavioral shift — invisible on a dashboard, irrelevant to an acquirer — is the actual failure mode. Manus didn't break the rules. It completed the playbook.

Pattern Index — Case Study 001 Complete

"A SaaS company doesn't fall because it changed a policy. It falls because it tries to hide the consequences."

— Observed in r/ManusOfficial, November 2025

Next Case Study

Coming Soon

Patterns Defined

7

incl. 1 system-level pattern

Sources Cross-Referenced

7